Caplin Platform Deployment

This page gives recommendations on how to deploy Caplin Platform in a typical live environment.

Deployment Architectures

Caplin Platform can be deployed in several different architectures to support various security and resilience requirements. The following sections describe each of these architectures and explain where the individual Caplin Platform components are situated within them.

Security Model

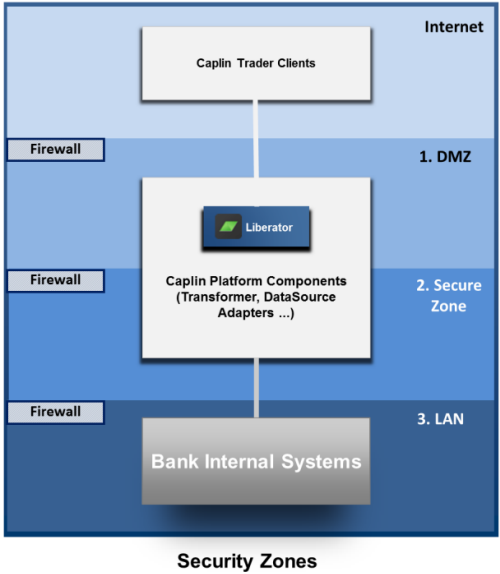

Because end-users can access the Bank’s data feeds and trading systems from the Internet, the Caplin components and Bank systems should be allocated to networks that are secured behind appropriate firewalls. The following diagram shows a standard three-tier security model, based on security zones separated by firewalls. The zones have increasing levels of security from the top to the bottom of the diagram.

The Internet is the publicly accessible zone and is insecure. The client applications, such as applications implemented using Caplin Trader, typically execute in this zone.

- Zone 1 is the "Demilitarized Zone" (DMZ), housing the Bank’s servers that interface with the Internet, and Caplin Liberator servers. This zone sits behind a firewall, but the servers are addressable from the Internet Zone.

- Zone 2 is the Secure Zone. This sits behind a further firewall and the servers in it are on a separate sub-network to servers in the DMZ. This zone contains the rest of the Caplin Platform components. It also contains the application server(s) that serve the Bank’s client portal and Caplin Trader clients.

- Zone 3 is the most secure of the zones; it is on a separate network (LAN), only accessible from the Secure Zone through another firewall. This is where the Bank’s core internal systems that interact with Caplin Platform are located.

Failover Legs

Caplin Platform is typically deployed with multiple component instances to provide resilience against hardware, software, and network failures. To help achieve this, the hardware and software components can be arranged in processing units called "failover legs". In normal operation, all the components in a single failover leg – typically Liberator, Transformer, Integration adapters, and the Bank’s internal systems – work together to provide the system’s functionality. If a component fails (or a connection to it, or the machine on which it runs), the operations provided by that component are taken up by an alternative copy of the component running in a different failover leg. This transfer of operation is called "failover".

Single Leg Deployment (no Failover)

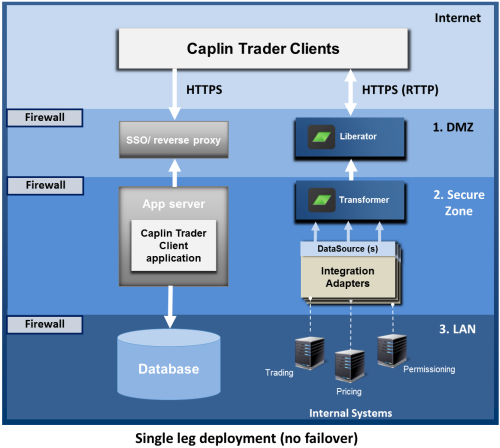

The following diagram shows a single leg deployment of an installation. This is the simplest configuration and does not provide failover if a component, machine, or network connection should fail.

Assuming the Caplin Trader client executes in a browser, it is deployed on an application server behind the Bank’s existing single sign-on (SSO) system (see Authenticating Client Sessions). Clients access the server through HTTPS, and after the user has signed on, the client application is downloaded to the browser from the application server. The application may use a database for persistent storage of data, such as user-modified layouts. The Caplin Platform server components (Liberator, Transformer, and Integration adapters) interact with the client in the browser: they stream price information to the application in real time, exchange trade messages, and supply permission information.

Security Zones

The diagram shows how components can be deployed to conform to the security model previously described (see Security Model):

- Being internet facing, Liberator is deployed behind an internet firewall within a DMZ.

- Transformer and the Integration adapters reside in the Secure Zone.

- The database that is accessed by the application server, and the Bank’s internal systems for pricing, trading, granting user permissions, and so on, reside in a highly secure LAN environment behind an additional firewall.

The arrows show the direction in which connections between components are initiated. The directions are typically determined by the security model. In this example, as the Liberator is in the DMZ, it is not allowed to initiate the connection to the Transformer in the Secure Zone, as this would violate the security regime; the connection attempt would be denied by the firewall. Instead, the Transformer initiates the connection, which the Liberator listens for. Similarly, the Integration adapters in the Secure Zone are not allowed to initiate connections to the Bank’s highly secure internal systems in the LAN zone; instead they listen for connections from the internal systems.

| When two Caplin Platform components communicate with each other, to meet your particular security restrictions, you can configure which component initiates the connection. |
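The connection-direction rule described above can be pictured as a firewall allow-list. The following is a minimal sketch, not Caplin configuration: the zone names, the `ALLOWED_INITIATIONS` table, and the `may_initiate` function are hypothetical, and only the directions mentioned in this section are encoded. A real firewall rule set is site-specific.

```python
# Hypothetical allow-list of (initiator zone, listener zone) pairs.
# Only the directions described in this section are included.
ALLOWED_INITIATIONS = {
    ("Internet", "DMZ"),      # clients connect to Liberator over HTTPS
    ("Secure Zone", "DMZ"),   # Transformer connects to Liberator
    ("LAN", "Secure Zone"),   # internal systems connect to Integration adapters
}


def may_initiate(initiator_zone: str, listener_zone: str) -> bool:
    """Return True if this sketch permits the connection attempt."""
    if initiator_zone == listener_zone:
        # Assumption: no firewall sits between components in the same zone.
        return True
    return (initiator_zone, listener_zone) in ALLOWED_INITIATIONS


print(may_initiate("Secure Zone", "DMZ"))  # Transformer -> Liberator
print(may_initiate("DMZ", "Secure Zone"))  # Liberator -> Transformer
```

In this sketch the second call is refused, which mirrors why the Transformer, rather than the Liberator, initiates the connection between the two.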

Connections

When the Caplin Trader client executes in a browser, Liberator listens on the HTTPS port for client traffic (using the RTTP protocol). Connections between Liberator, Transformer and Integration Adapters use the DataSource protocol, based on TCP/IP. Connections between Integration Adapters and the internal systems may use the protocols of standard market data platforms (such as Refinitiv’s RTDS), or they can use other proprietary protocols. The connection between the application server and the database is typically through JDBC.

| For maximum security, the Caplin Trader clients should connect to the application server and the Liberator via HTTPS, not via HTTP. |

DNS configuration

When the Caplin Trader client is browser-based, the Liberator URL and the application server’s URL must share a common domain. For example, if Liberator is hosted at liberator.mydomain.com, the application server may be hosted at appserver.mydomain.com but not at appserver.myotherdomain.com.
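As a quick pre-deployment check, the common-domain requirement can be tested with a few lines of Python. This is a rough sketch under a stated assumption: "common domain" is taken to mean that the last two DNS labels match, which is too naive for suffixes such as `co.uk` (pass `labels=3` in that case). The function name `share_common_domain` is hypothetical.

```python
def share_common_domain(host_a: str, host_b: str, labels: int = 2) -> bool:
    """Compare the last `labels` DNS labels of two hostnames, ignoring case."""

    def tail(host):
        # Drop any trailing dot, then keep only the right-most labels.
        return host.lower().rstrip(".").split(".")[-labels:]

    tail_a, tail_b = tail(host_a), tail(host_b)
    # Require that both hosts actually have `labels` labels to compare.
    return len(tail_a) == labels and tail_a == tail_b


print(share_common_domain("liberator.mydomain.com", "appserver.mydomain.com"))
print(share_common_domain("liberator.mydomain.com", "appserver.myotherdomain.com"))
```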

Multi-leg Deployment and Resilience

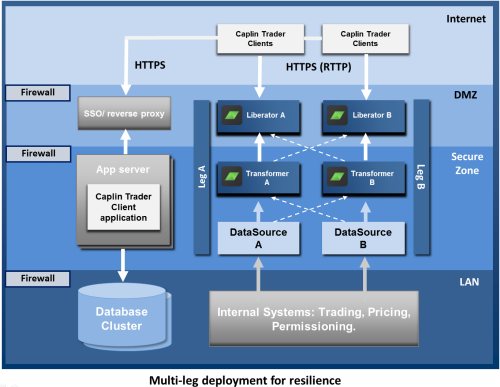

To ensure resilient operation, Caplin Platform should be deployed using multiple component instances across multiple failover legs, as shown in the following diagram.

The diagram shows a single geographical site running Caplin Platform and associated software, within the security regime described in the Security Model section. At startup, Legs A and B are independent of each other, and are used to balance the transaction load. Each leg is configured the same way and is connected to the same internal systems for pricing, trading, and permissioning. If a Caplin Platform component detects a significant reduction in quality of service during operation, it automatically instigates failover to an alternate instance of the component – the corresponding instance in the other leg. For example, if Liberator A fails, the clients connected to it fail over to Liberator B.

Failover connections are configured for each component instance. To meet the requirements of the security regime (Liberators cannot initiate connections to Transformers) but still allow failover, and to reduce the time taken to fail over, the connections between pairs of failover components are made in advance when the components start up, rather than during a failover event. The dotted arrows show connections that are made in advance but are not active (transmitting data) until a failover occurs. For example, in the diagram, when Transformer A starts up, it connects to the failover Liberator B in addition to Liberator A (the Liberator it normally talks to).
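The set of connections made at startup can be enumerated with a short sketch. It assumes one Transformer and one Liberator per leg, and uses the hypothetical labels "active" for a connection within a leg and "standby" for a connection made in advance to the other leg; it is an illustration, not Caplin configuration.

```python
from itertools import product


def planned_connections(legs):
    """List every Transformer -> Liberator connection made at startup.

    A connection is 'active' within a leg and 'standby' across legs.
    """
    return [
        (
            f"Transformer {tr_leg}",
            f"Liberator {lib_leg}",
            "active" if tr_leg == lib_leg else "standby",
        )
        for tr_leg, lib_leg in product(legs, repeat=2)
    ]


for source, target, state in planned_connections(["A", "B"]):
    print(f"{source} -> {target}: {state}")
```

For legs A and B this lists four connections, two of which are standby connections that carry no data until a failover occurs.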

For more detail on how failover works, see Failover Scenarios.

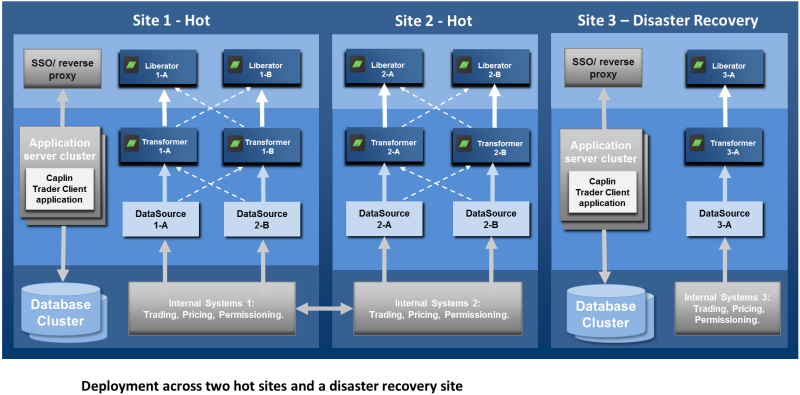

Cross-site Deployment

For maximum resilience, both Caplin Platform and the application server components should be deployed across multiple sites. The following diagrams illustrate an example deployment across 3 geographically distinct sites. Sites 1 and 2 are "hot" sites – that is, they are live installations. Site 3 is for disaster recovery purposes; if the whole of Site 1 or Site 2 should become unavailable, the software at Site 3 is started up and the client connections that would have been handled by the now unavailable site are routed to Site 3 instead.

| To reduce transmission latency between clients and the Liberator, Caplin recommends establishing a hot site within each centre of geographic client density. For example, Site 1 could be located in London to handle connections and transactions from clients in Europe, and Site 2 could be located in New York to handle connections and transactions originating in North America. See Deployment across a WAN. |

Note that each hot site has two failover legs to handle failure of individual components within the site (see Multi-leg Deployment and Resilience). Disaster recovery sites are set up in exactly the same way as hot sites, and may also use multiple failover legs as required. You may want to set up more than one disaster recovery site. Although there are no technical differences between a disaster recovery site and a hot site, the software license fees for the disaster recovery site may be discounted provided the software is only operational when one of the hot sites is not available to end-users. The failover from a hot site to a disaster recovery site is typically initiated manually.

Deployment across a WAN

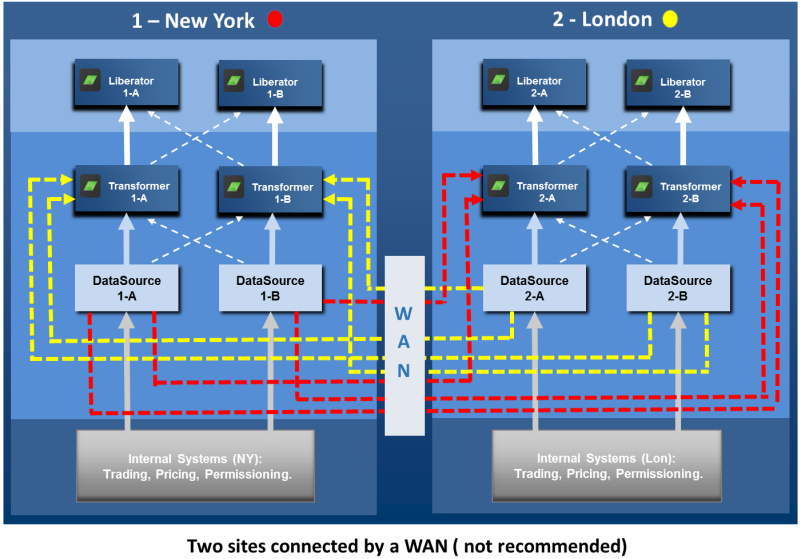

You may need to deploy your Caplin installation across several sites and share data across those sites. For example, you could have a site in New York serving North American FI customers, and a site in London serving European FI customers. The Bank’s pricing system in New York supplies US Bond prices, and the pricing system in London supplies European bond prices. Customers in both regions wish to see and trade both US and European bonds, so pricing information must be exchanged between the two sites over a relatively slow wide area network (WAN). The following diagram shows an obvious, but not recommended, way to do this. In this deployment, every Pricing Integration Adapter is connected to every Transformer, so that every Transformer can receive prices for all instruments.

However, this approach is not recommended for the following reasons:

- It wastes communication bandwidth. Assume the Transformers at a site are both active and so share the connection and traffic load. Each such Transformer subscribes to instruments in isolation from the other Transformer, even when some or most of the subscriptions are in common. When such subscriptions are for instruments whose prices are supplied from the site in the other regional location, the price updates are sent across the WAN twice, once for each subscribing Transformer. This wastes bandwidth on the slowest communication link in the network, and can result in end-users experiencing high latency on updates.

- The connection topology is complex.

- The sites are too interdependent. Changes in one site, such as adding another pricing Integration adapter, affect the other site.

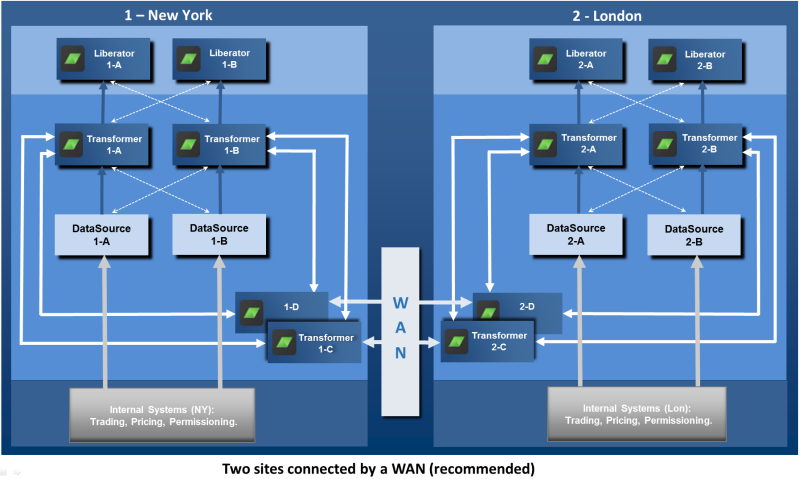

These problems become more acute as more regional locations are added. For example, adding a third site (Tokyo) increases the number of Transformer to Integration Adapter connections from 8 to 36. The following diagram shows a recommended approach to connecting regional sites:

Each site has an additional Transformer: '1-C' in New York and '2-C' in London. The sole function of this Transformer is to route subscription requests and price data updates for non-local instruments to the appropriate remote site, where they are received by the corresponding Transformer at the remote site and passed on to the relevant Integration Adapter. This arrangement has several advantages:

- Traffic on the WAN is much reduced, and scales more linearly as further regional sites are added.

- The connection topology is much simpler.

- Local changes can be made at one site without affecting the other regional sites.

- Consequently, it is much easier to add another regional site.
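The bandwidth argument can be made concrete with a small calculation. The sketch below counts the price-update messages that cross the WAN for a single remote instrument. It assumes that in the direct topology each subscribing Transformer receives its own copy of every update, and that in the routed topology the additional Transformer receives one copy and distributes it locally. The function name and the figures are hypothetical.

```python
def wan_messages(updates: int, subscribing_transformers: int, routed: bool) -> int:
    """Count price-update messages crossing the WAN for one remote instrument.

    Direct topology: each subscribing Transformer receives its own copy.
    Routed topology (assumption): the routing Transformer receives one copy
    and distributes it to the local Transformers.
    """
    if subscribing_transformers == 0:
        return 0  # nobody subscribed, so nothing is sent
    return updates if routed else updates * subscribing_transformers


print(wan_messages(1000, 2, routed=False))  # both local Transformers subscribe directly
print(wan_messages(1000, 2, routed=True))   # one subscription via the routing Transformer
```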

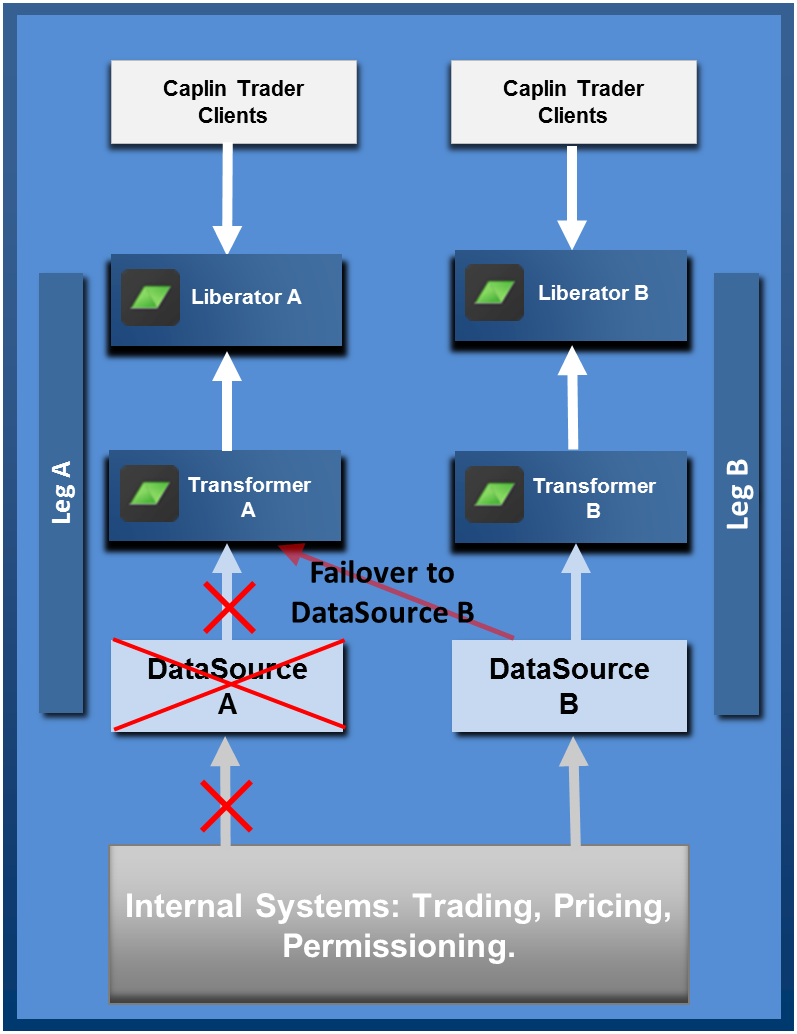

Failover Scenarios

When a component fails, Caplin Platform will react to maintain quality of service on each failover leg. The following scenarios show how failover is handled for each component type.

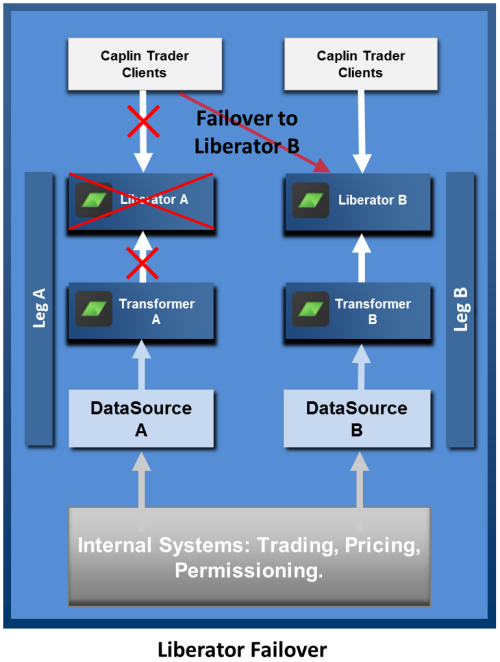

Liberator Failover

On loss of a Liberator instance, or loss of connection to the Liberator, Caplin Trader clients automatically connect to an alternate Liberator instance. The failover information is configured within the StreamLink library built into the client. When the client connects to the alternate Liberator, all previous instrument subscriptions and trade channels are restored.

The end-user does not have to explicitly log in to the alternate Liberator; this happens automatically in the background using a KeyMaster token hosted by the application server. For more information see Authenticating Client Sessions.
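The behavior described above can be sketched as a toy client that remembers its subscriptions and restores them when it connects to an alternate server. This is an illustration only and does not use the StreamLink API: the `FailoverClient` class, the availability callback, and the subject name `/FX/EURUSD` are all hypothetical, and the KeyMaster login step is omitted.

```python
class FailoverClient:
    """Toy client that restores its subscriptions after failover."""

    def __init__(self, liberators, is_available):
        self.liberators = list(liberators)  # ordered list of candidate servers
        self.is_available = is_available    # callable: server name -> bool
        self.subscriptions = set()
        self.current = None
        self.restored = []                  # log of (server, subject) resubscriptions

    def subscribe(self, subject):
        self.subscriptions.add(subject)

    def connect(self):
        """Connect to the first available server and restore subscriptions."""
        for server in self.liberators:
            if self.is_available(server):
                self.current = server
                for subject in sorted(self.subscriptions):
                    self.restored.append((server, subject))
                return server
        raise ConnectionError("no Liberator available")


up = {"Liberator A": True, "Liberator B": True}
client = FailoverClient(["Liberator A", "Liberator B"], lambda name: up[name])
client.connect()                 # connects to Liberator A
client.subscribe("/FX/EURUSD")
up["Liberator A"] = False        # simulate loss of Liberator A
client.connect()                 # fails over and restores the subscription
print(client.current, client.restored)
```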

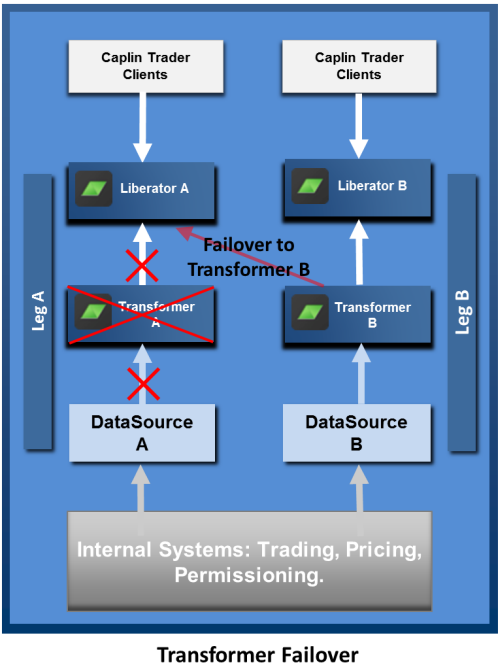

Transformer Failover

On loss of a Transformer instance, or loss of connection to the Transformer, the connected Liberators use failover connections to maintain quality of service. Clients remain connected to the same Liberator instance, so the failover is not apparent to them.

A Liberator’s subscriptions to streaming data are automatically reinstated when the DataSource application that supplies this data (Transformer A in this example) fails over. For example, if Liberator A subscribes to 5,000 streamed instruments served by Transformer A, and this Transformer becomes unavailable, Liberator A automatically resubscribes to these instruments on Transformer B. For simplicity of presentation, the diagram implies that before the failover, Liberator A has only subscribed to instruments from Transformer A, and after failover it requests all those instruments again from Transformer B. In reality, Liberators and Transformers are typically set up to balance subscriptions across all the Integration Adapters, which reduces the number of subscriptions that have to be switched over when a failover occurs.
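The effect of balancing on the size of a failover can be illustrated with a short calculation. The sketch assumes, purely for illustration, that 5,000 subscriptions are either all served by one source or spread round-robin across two sources; the source names and the `subscriptions_to_move` helper are hypothetical.

```python
def subscriptions_to_move(assignments: dict, failed_source: str) -> int:
    """Count subscriptions that must be re-requested when one source fails."""
    return sum(1 for source in assignments.values() if source == failed_source)


instruments = [f"INSTR{i}" for i in range(5000)]

# Case 1: every instrument is served by source A (as the diagram implies).
all_on_a = {name: "source A" for name in instruments}

# Case 2 (assumption): round-robin balancing across sources A and B.
sources = ["source A", "source B"]
balanced = {name: sources[i % 2] for i, name in enumerate(instruments)}

print(subscriptions_to_move(all_on_a, "source A"))  # 5000
print(subscriptions_to_move(balanced, "source A"))  # 2500
```

With round-robin balancing across two sources, only half of the subscriptions have to be switched when one source fails.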

Integration Adapter Failover

On loss of a DataSource (Integration Adapter) instance, or loss of connection to the DataSource, the connected Transformers use failover connections to maintain quality of service. Clients remain connected to the same Liberator instance, so the failover is not apparent to them.

A Transformer’s subscriptions to streaming data are automatically reinstated when the Integration Adapter application that supplies this data (Integration Adapter A in this example) fails over. For example, if Transformer A subscribes to 5,000 streamed instruments served by Integration Adapter A, and this Integration Adapter becomes unavailable, Transformer A automatically resubscribes to these instruments on Integration Adapter B. For simplicity of presentation, the diagram implies that before the failover, Transformer A has only subscribed to instruments on Integration Adapter A, and after failover it requests all those instruments again from Integration Adapter B. In reality, Liberators and Transformers are typically set up to balance subscriptions across all the Integration Adapters, which reduces the number of subscriptions that have to be switched over when a failover occurs.

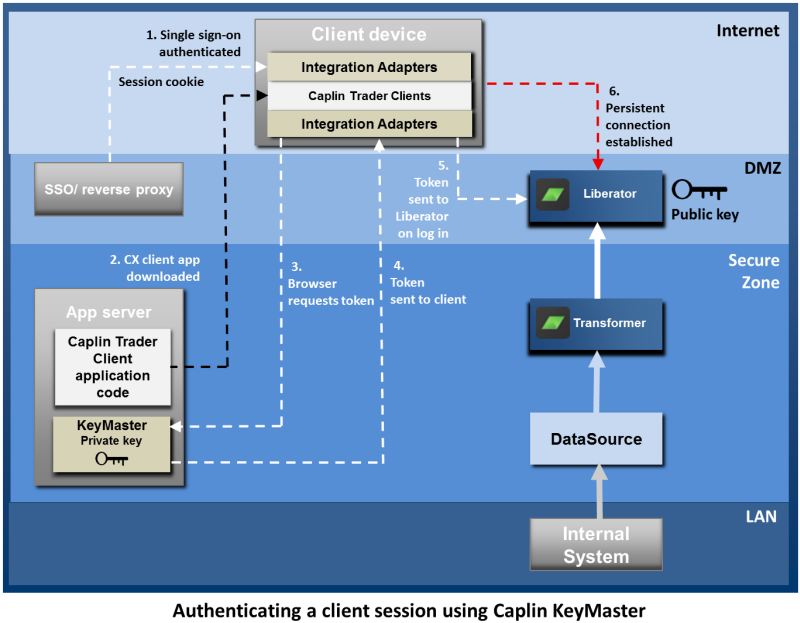

Authenticating Client Sessions

Client sessions should be authenticated through the Bank’s existing single sign-on (SSO) system. Once an end-user has signed on, the Caplin Trader client logs the user on to the Liberator (via the StreamLink library built into the application). This can be done automatically using Caplin KeyMaster. KeyMaster is software that integrates Liberator with an existing SSO system, so that end-users do not have to explicitly log in to the Liberator server in addition to logging in to the Bank’s single sign-on server. The following diagram shows the role of each component in handling sign-on and authentication of client sessions, and shows the main steps of the authentication process, assuming that KeyMaster is being used. It assumes the client runs in a browser.

The end-user signs on to the Bank’s portal site. The sign-on process is handled by the Bank’s existing SSO system, which sends a session cookie back to the browser:

- The end-user navigates to the Caplin Trader client URL protected by the SSO, and downloads the client application.

- The client application requests a user credentials token from the KeyMaster running on the application server. This request is secured by the SSO.

- KeyMaster generates a signed user credentials token using a private key and sends this to the client application.

- The browser uses the token to log into Liberator.

- Liberator uses the public key to verify the signature in the token. If the verification succeeds, the Liberator sets up a persistent connection to the client application.

Note that KeyMaster tokens may only be used once and expire after a configured timeout.
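The single-use and expiry rules can be illustrated with a self-contained sketch. It is not the KeyMaster implementation or token format: KeyMaster signs with a private key and Liberator verifies with the public key, whereas this sketch substitutes an HMAC with a shared secret so that it runs on the Python standard library alone. The token layout, `issue_token`, and `TokenVerifier` are hypothetical.

```python
import hashlib
import hmac
import secrets

# Stand-in for KeyMaster's key pair (assumption: shared secret, not RSA).
SECRET = secrets.token_bytes(32)


def issue_token(username: str, now: float) -> str:
    """Create a token of the form 'username|timestamp|nonce|signature'."""
    payload = f"{username}|{int(now)}|{secrets.token_hex(8)}"
    signature = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
    return f"{payload}|{signature}"


class TokenVerifier:
    """Accepts a token once, and only within `timeout` seconds of issue."""

    def __init__(self, timeout: float):
        self.timeout = timeout
        self.seen = set()

    def verify(self, token: str, now: float) -> bool:
        payload, _, signature = token.rpartition("|")
        expected = hmac.new(SECRET, payload.encode(), hashlib.sha256).hexdigest()
        if not hmac.compare_digest(signature.encode(), expected.encode()):
            return False  # signature does not match
        issued_at = int(payload.rsplit("|", 2)[1])
        if now - issued_at > self.timeout:
            return False  # token has expired
        if token in self.seen:
            return False  # token was already used
        self.seen.add(token)
        return True


verifier = TokenVerifier(timeout=30)
token = issue_token("alice", now=1000)
print(verifier.verify(token, now=1005))  # first use, within the timeout
print(verifier.verify(token, now=1006))  # same token presented again
print(verifier.verify(issue_token("alice", now=1000), now=1100))  # too old
```

The timestamps are passed in explicitly so that the expiry rule can be exercised without waiting.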

If Liberator fails over, repeated authentication against the SSO is not required; instead, the client requests a new KeyMaster token [steps 2-5] before logging into the alternate Liberator instance. For more information on client authentication using KeyMaster, see the KeyMaster Overview.

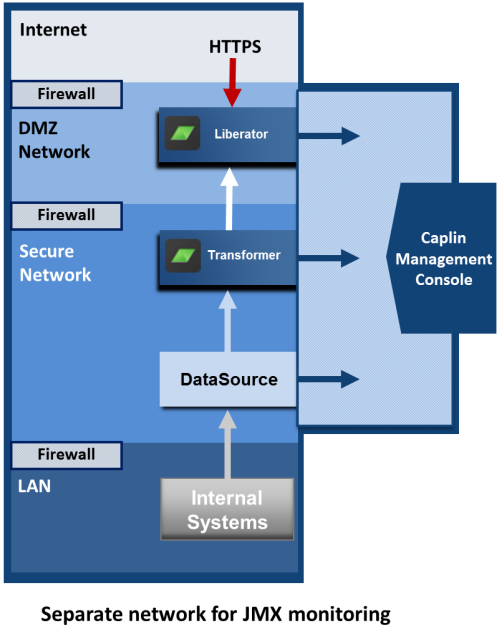

Deploying the Caplin Management Console (CMC)

The Caplin Management Console (CMC) allows you to monitor the behavior and performance of all Caplin Platform components including Liberator, Transformer, and Integration Adapters. The CMC establishes a separate JMX connection to each Caplin Platform component. Each server component listens on an RMI registry port and an RMI client port. The JMX runtime uses a static JMX service URL to perform a JNDI lookup of the JMX connector stub in the RMI registry.

Authentication of CMC sessions follows the same principles as client session authentication (see Authenticating Client Sessions). First the CMC requests a KeyMaster credentials token from the application server, then it uses the token for secure login to Liberator.

The security implications of connecting a monitoring device directly into the live business data networks should be considered. Caplin Platform allows you to run your monitoring application on a completely different network, should this be a security requirement; this is shown in the following diagram:

In the diagram, the Caplin Platform components and internal systems are on separate networks and communicate across firewalls. The monitoring connections (RMI Registry and RMI Client) for Liberator, Transformer, and the Integration Adapters go to a Monitoring Network, which is completely separate from the other networks. The Caplin Management Console is also connected to the Monitoring Network.
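When opening firewall ports on the Monitoring Network, it can help to see how the two RMI ports appear in a JMX service URL. The sketch below builds a URL in the standard JMX-over-RMI form. The host name, port numbers, and the JNDI name `jmxrmi` are assumptions made for illustration; the values used by a given Caplin component come from its own configuration.

```python
def jmx_service_url(host: str, registry_port: int, client_port: int,
                    jndi_name: str = "jmxrmi") -> str:
    """Build a JMX-over-RMI service URL with fixed registry and client ports.

    The JNDI name defaults to 'jmxrmi', which is an assumption here.
    """
    return (f"service:jmx:rmi://{host}:{client_port}"
            f"/jndi/rmi://{host}:{registry_port}/{jndi_name}")


# Hypothetical host and ports, for illustration only.
print(jmx_service_url("liberator.example.com", 1099, 1100))
```

Both ports in the URL must be reachable from the machine that runs the CMC.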